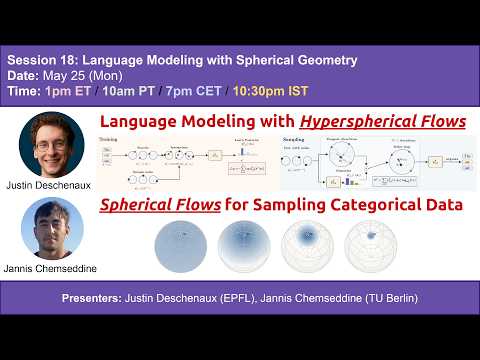

S18 | Language Modeling with Spherical Geometry

Justin Deschenaux (EPFL) and Jannis Chemseddine (TU Berlin) present their recent works on hyperspherical language modeling. By lifting tokens onto the sphere, they define language flows along SLERP and vMF paths. The vMF path admits a closed-form score, Hyperspherical flows improve code generation over prior flow language models, and at matched NFE, the PC sampler with vMF paths improves accuracy on Sudoku.

Discrete diffusion samples from a factorized distribution that is strictly less expressive than autoregressive models, while Flow Language Models (FLMs) avoid factorized sampling but add Gaussian noise on one-hot vectors, a noise process with no clear semantic interpretation for text. Both papers explore the sphere as a natural geometry for language flows. By lifting tokens onto the hypersphere, the authors develop spherical language modeling via SLERP and vMF paths. The vMF path has a closed-form conditional score, enabling predictor-corrector samplers on the sphere. Training with rotations avoids materializing one-hot vectors, making it cheaper than standard FLM training. On TinyGSM (code generation), prior FLMs reach roughly 0% accuracy while hyperspherical flows reach 12-18%. At matched NFE, a PC sampler with vMF paths improves accuracy on Sudoku. Joint work with Caglar Gulcehre, Gregor Kornhardt, and Gabriele Steidl.

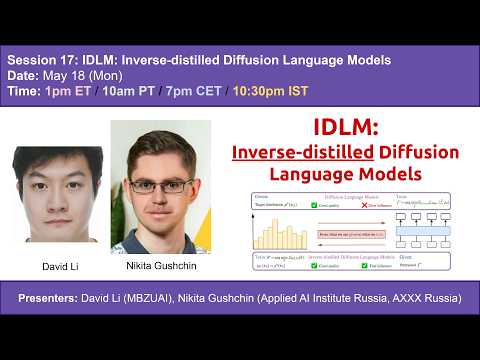

S17 | IDLM: Inverse-distilled Diffusion Language Models

IDLM extends Inverse Distillation to discrete diffusion language models, with a uniqueness theorem and gradient-stable relaxations that enable effective training, significantly reducing the number of inference steps while preserving the teacher model's entropy and generative perplexity.

Diffusion Language Models (DLMs) have recently achieved strong results in text generation, but their multi-step sampling makes inference slow and limits practical use. This work extends Inverse Distillation, a technique originally developed for accelerating continuous diffusion models, to the discrete setting. The extension raises two challenges: the inverse distillation objective lacks uniqueness guarantees, which can yield suboptimal solutions, and backpropagation through discrete sampling is non-trivial and often unstable. The authors first prove that their inverse formulation admits a unique solution, ensuring valid optimization, and then introduce gradient-stable relaxations that make training effective. Experiments across multiple DLMs show that IDLM significantly reduces the number of inference steps while preserving the teacher model's entropy and generative perplexity.

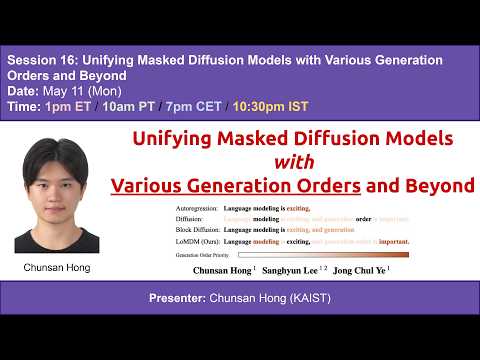

S16 | Unifying Masked Diffusion Models with Various Generation Orders and Beyond

Order-expressive masked diffusion (OeMDM) unifies masked diffusion, autoregressive, and block diffusion models in a single framework, and its extension LoMDM jointly learns the generation order and diffusion backbone end-to-end, outperforming prior discrete diffusion baselines on language modeling benchmarks.

Masked diffusion models (MDMs) are a promising alternative to autoregressive models for language generation, but their quality depends critically on the generation order. Prior work either hard-codes an ordering (e.g., blockwise left-to-right) or learns an ordering policy on top of a pretrained MDM, incurring extra cost and suboptimal two-stage optimization. This paper introduces the order-expressive masked diffusion model (OeMDM), a framework spanning a broad class of diffusion processes with various generation orders that subsumes MDMs, autoregressive models, and block diffusion as special cases. Building on OeMDM, the learnable-order masked diffusion model (LoMDM) jointly learns the generation ordering and the diffusion backbone from scratch with a single objective, enabling context-dependent token ordering at sampling time. Empirically, LoMDM outperforms a range of discrete diffusion baselines across multiple language modeling benchmarks.

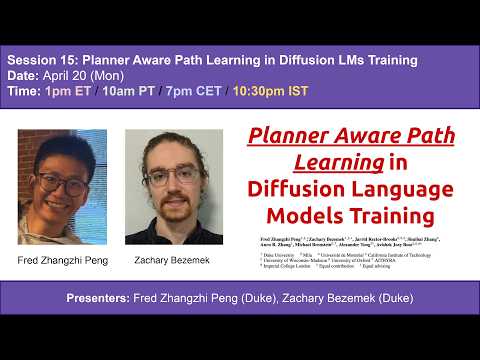

S15 | Planner Aware Path Learning in Diffusion Language Models Training

Fred Zhangzhi Peng presents Planner Aware Path Learning (PAPL), a simple modification to the masked diffusion loss that aligns training with planner-based inference, yielding large gains on protein modeling, text generation, and code.

In today's session, Fred Zhangzhi Peng presents a study of a fundamental mismatch in diffusion language models between training and planner-based inference. While planners enable faster, more flexible generation by selecting non-uniform denoising paths, standard training assumes uniformly random trajectories, leading to a misaligned objective. The talk introduces a theoretical analysis showing that the standard ELBO fails under planning, and proposes a new Planned ELBO (P-ELBO) along with Planner Aware Path Learning (PAPL), a simple modification to the masked diffusion loss that aligns training with inference. Empirically, PAPL yields strong gains across domains, including ~40% improvement on protein modeling, up to 4x MAUVE gains in text generation, and +23% on HumanEval pass@10 for code generation.

S14 | One-step Language Modeling via Continuous Denoising

Flow-based Language Models (FLMs) replace factorized ancestral sampling with sample-level continuous transport via flow matching, and can be distilled into a flow map language model that generates in as few as one step, matching 8-step discrete diffusion quality with an ~8.3× speedup.

Language models based on discrete diffusion have shown promise for parallel generation, but they suffer from factorization error that causes sharp quality degradation in the few-step regime. To overcome this, Flow-based Language Models (FLMs) move from factorized ancestral sampling to sample-level continuous transport via flow matching. FLMs are high-performing through principled design choices such as a decoding-error-based time reparameterization. To enable few-step generation, the paper introduces the two-time denoiser, a novel reparameterization of the flow map that provably lies on the probability simplex, allowing the authors to distill FLM into a flow map language model (FMLM) via cross-entropy. FMLM transports noise to data in as few as one step, outperforming recent few-step discrete diffusion models and matching their 8-step quality at one step with an approximately 8.3× speedup.

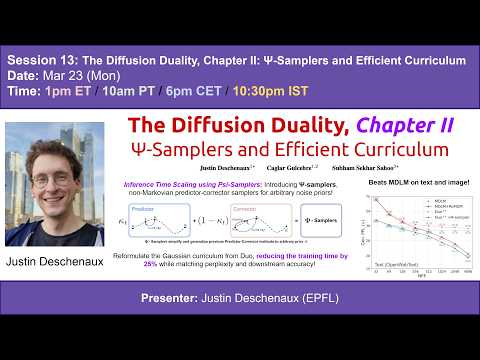

S13 | The Diffusion Duality, Chapter II: Ψ-Samplers and Efficient Curriculum

Justin Deschenaux presents a family of Predictor-Corrector samplers for discrete diffusion models that generalize prior approaches to arbitrary noise processes and, unlike conventional methods, continue to improve as the number of sampling steps increases.

In today's session, Justin Deschenaux presents The Diffusion Duality, Chapter II: Ψ-Samplers and Efficient Curriculum. This work introduces a family of Predictor-Corrector (PC) samplers for discrete diffusion models. The method generalizes prior approaches to arbitrary noise processes and, when combined with uniform-state diffusion, overcomes the limitations of ancestral samplers. Unlike conventional methods, these samplers continue to improve as the number of sampling steps increases. The framework is evaluated on language and image modeling tasks, achieving lower perplexity on OpenWebText and improved FID/IS scores on CIFAR-10. Additionally, the work introduces a memory-efficient training curriculum that reduces training time and memory usage while maintaining strong performance.

S12 | Discrete Feynman-Kac Correctors

Mohsin Hasan and Viktor Ohanesian present Discrete Feynman-Kac Correctors, a framework for controlling discrete diffusion sampling at inference time using Sequential Monte Carlo, enabling temperature control and reward-guided generation without retraining.

In today's session, Mohsin Hasan and Viktor Ohanesian present their recent work on Discrete Feynman-Kac Correctors, a framework for controlling the sampling distribution of discrete diffusion models at inference time. The method uses Sequential Monte Carlo (SMC) to enable temperature control (annealing), combine multiple diffusion processes, and incorporate external reward functions, without retraining or fine tuning the original model. The framework is demonstrated on applications including Ising model sampling, improved code generation, and reward guided protein sequence generation.

S11 | CANDI: Hybrid Discrete-Continuous Diffusion Models

Continuous diffusion dominates images and LLMs use continuous embeddings, yet discrete diffusion still wins for language. CANDI explains this via "temporal dissonance" and fixes it by keeping some tokens clean as anchors while corrupting the rest with Gaussian noise.

Continuous diffusion dominates image generation. LLMs process text through continuous embeddings. So why does discrete diffusion still win for language? CANDI explains why, it's a "temporal dissonance": at large vocabulary sizes, Gaussian noise destroys token identity way before it meaningfully degrades the continuous signal. The model can either learn discrete conditional structure or continuous geometry, but not both simultaneously. The fix? Keep some tokens clean as anchors for discrete structure, corrupt the rest with Gaussian noise. Decoupling the two lets the model learn both simultaneously, enabling off-the-shelf classifier guidance and better low-NFE generation.

S10 | Reasoning with Latent Tokens in Diffusion Language Models

Andre He (LTI @ CMU) presents why latent tokens in diffusion language models enable planning and lookahead, and how similar multi-token prediction objectives improve autoregressive reasoning.

In today's session, Andre He (LTI @ CMU) presented his paper Reasoning with Latent Tokens in Diffusion Language Models, exploring why diffusion language models outperform autoregressive (AR) models on synthetic reasoning tasks. Andre argues that diffusion models naturally maintain "latent tokens" (as joint predictions over undecoded positions) which enable planning and lookahead, and demonstrated that modulating these latent tokens creates a smooth tradeoff between inference speed and output quality. Andre further showed that introducing a similar multi-token prediction objective into AR models significantly improves their reasoning performance, suggesting latent tokens as a general mechanism for enhancing global coherence.

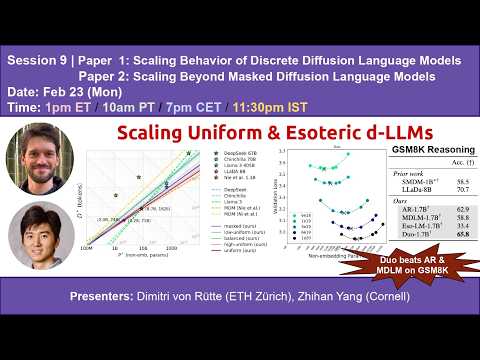

S9 | Scaling Discrete Diffusion Language Models

Dimitri von Rütte (ETH) and Zhihan Yang (Cornell) present two papers on scaling laws of discrete diffusion LLMs that challenge the dominance of Masked Diffusion.

Dimitri von Rütte (ETH) and Zhihan Yang (Cornell) present "Scaling Behavior of Discrete Diffusion Language Models" (https://arxiv.org/abs/2512.10858) and "Scaling Beyond Masked Diffusion Language Models" (https://www.arxiv.org/abs/2602.15014), two recent papers presenting systematic scaling laws of uniform-state and hybrid discrete diffusion LLMs. Importantly, both papers challenge the dominance of Masked Diffusion.

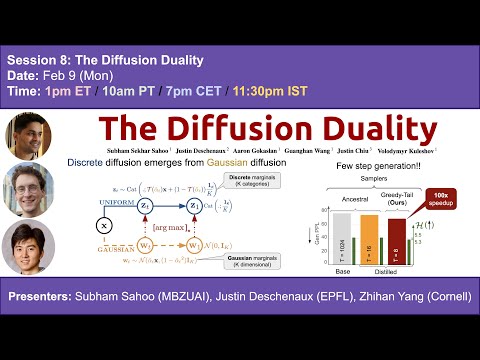

S8 | The Diffusion Duality

Today, Subham Sahoo (IFM), Justin Deschenaux (EPFL) and Zhihan Yang (Cornell) are presenting The Diffusion Duality (ICML 2025)

Uniform-state discrete diffusion models hold the promise of fast text generation due to their inherent ability to self-correct. However, they are typically outperformed by autoregressive models and masked diffusion models. In this work, we narrow this performance gap by leveraging a key insight: Uniform-state diffusion processes naturally emerge from an underlying Gaussian diffusion. Our method, Duo, transfers powerful techniques from Gaussian diffusion to improve both training and sampling. First, we introduce a curriculum learning strategy guided by the Gaussian process, doubling training speed by reducing variance. Models trained with curriculum learning surpass autoregressive models in zero-shot perplexity on 3 of 7 benchmarks. Second, we present Discrete Consistency Distillation, which adapts consistency distillation from the continuous to the discrete setting. This algorithm unlocks few-step generation in diffusion language models by accelerating sampling by two orders of magnitude.

S7 | Planned Diffusion

Daniel Israel and Tian Jin discuss Planned Diffusion. Planned diffusion speeds up text generation by planning with an autoregressive model and then generating multiple spans in parallel with diffusion while keeping quality nearly the same.

Daniel Israel and Tian Jin discuss planned diffusion, a hybrid text generation method where a language model first creates a short autoregressive "plan" that splits output into independent spans, then generates those spans in parallel with diffusion, achieving significantly faster generation while maintaining near-autoregressive quality.

S6 | TiDAR: Think in Diffusion, Talk in Autoregression

Jingyu Liu will discuss TiDAR, a hybrid decoding approach that combines diffusion-style parallel drafting with autoregressive verification for high quality and high throughput.

Jingyu Liu will discuss TiDAR, a hybrid decoding approach that combines diffusion-style parallel drafting with autoregressive verification for high quality and high throughput.

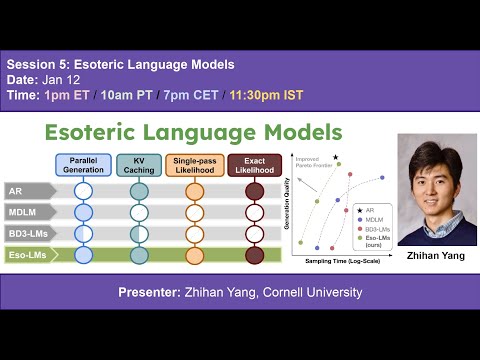

S5 | Esoteric Language Models

In this talk, Zhihan Yang presents Eso-LMs, which unifies AR and diffusion language models. Eso-LMs enable exact likelihoods and KV caching while preserving parallel generation.

In this session, Zhihan Yang presents Eso-LMs, a new family of language models that unifies autoregressive and diffusion-based generation. The talk reframes masked diffusion models as any-order autoregressive models and shows how a causal attention design can overcome long-standing limitations of diffusion LMs. This perspective enables, for the first time, exact likelihood computation for masked diffusion models and introduces KV caching at inference time, while preserving their ability to generate tokens in parallel. Combined with an optimized sampling schedule, Eso-LMs achieve a new state of the art on the speed-quality Pareto frontier for unconditional text generation, delivering 14 to 65x faster inference on long contexts compared to standard diffusion models and 3 to 4x faster inference than prior semi-autoregressive approaches. The presentation highlights both the theoretical insights behind the model design and the practical gains in inference efficiency.

S4 | DiffuCoder: Understanding and Improving Masked Diffusion Models for Code Generation

In this talk, Shansan Gong will present DiffuCoder and discuss how diffusion language models enable global planning and iterative refinement for code generation.

In this session, Shansan Gong presents DiffuCoder, a 7B diffusion large language model for code generation. The talk explores how diffusion LLMs differ from autoregressive models in decoding behavior, showing flexible causality and temperature controlled generation order, and introduces coupled-GRPO, a diffusion native RL method that improves code performance by +4.4% on EvalPlus while reducing AR bias.

S3 | OneFlow: Concurrent Mixed-Modal and Interleaved Generation with Edit Flows

In this talk, John Nguyen presents OneFlow, a non-autoregressive multimodal model for concurrent text and image generation.

In our third session, John Nguyen presents OneFlow, a non-autoregressive multimodal model for concurrent text and image generation. OneFlow combines insertion-based edits (EditFlow) with flow matching for images, outperforming autoregressive and diffusion baselines while using up to 50% fewer training FLOPs.

S2 | Peptune: De Novo Generation of Therapeutic Peptides with Guided Discrete Diffusion

In this talk, Sophia Tang shows how discrete diffusion enables more controllable and efficient molecule generation.

Discrete diffusion models provide much greater control over the generation process, making them a strong alternative to autoregressive models. In this talk, Sophia Tang (University of Pennsylvania) presents her paper, "PepTune: De Novo Generation of Therapeutic Peptides with Multi-Objective-Guided Discrete Diffusion," explaining why and showing how discrete diffusion enables more controllable and efficient molecule generation.

S1 | Diffusion Language Models beat AR in data constrained regime

In this talk, Mihir Prabhudesai shows that diffusion LLMs excel in such settings by extracting more information from limited data.

The secret behind the success of current LLMs lies in the massive amount of data they are trained on. However, as these models continue to scale, we are rapidly approaching a data-constrained regime--where models demand more data than is available; after all, we only have one internet. In this talk, Mihir Prabhudesai shows that diffusion LLMs excel in such settings by extracting more information from limited data.