How did diffusion LLMs get so fast?

Techniques for accelerating diffusion LLMs, from self-distillation and curriculum learning to KV caching and block diffusion

This video discusses techniques for making diffusion LLMs faster, including self-distillation through time, curriculum learning, confidence scores for unmasking, guided diffusion (FlashDLM), approximate KV caching (dLLM-Cache, dKV-Cache), and block diffusion.
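
Confidence-based unmasking, one of the techniques listed above, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the method from the video. A real diffusion LLM re-runs the model at every step to get fresh per-position distributions; here those distributions are fixed tables. The names `MASK` and `unmask_step` are hypothetical.

```python
from typing import List

MASK = -1  # hypothetical id for the [MASK] token

def unmask_step(tokens: List[int], probs: List[List[float]], k: int) -> List[int]:
    """Commit the k masked positions whose top prediction is most confident."""
    # Gather (confidence, position, argmax token) for every still-masked position.
    candidates = []
    for pos, tok in enumerate(tokens):
        if tok == MASK:
            dist = probs[pos]
            best = max(range(len(dist)), key=lambda v: dist[v])
            candidates.append((dist[best], pos, best))
    # Keep only the k most confident predictions; the rest stay masked.
    candidates.sort(key=lambda c: c[0], reverse=True)
    out = list(tokens)
    for _, pos, best in candidates[:k]:
        out[pos] = best
    return out

# Usage: vocabulary of 3 tokens, the last position is already decoded.
tokens = [MASK, MASK, MASK, 2]
probs = [[0.1, 0.9, 0.0], [0.5, 0.3, 0.2], [0.2, 0.2, 0.6], [0.0, 0.0, 1.0]]
print(unmask_step(tokens, probs, k=2))  # [1, -1, 2, 2]
```

Committing several confident tokens per step is what lets a diffusion LLM finish in fewer forward passes than there are tokens; the trade-off is that a low-confidence guess committed too early cannot be revised in this simple scheme.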

Transformers & Diffusion LLMs: What's the connection?

How Transformers evolved from machine translation to powering diffusion-based LLMs like LLaDA

Diffusion-based LLMs are a new paradigm for text generation: they progressively refine gibberish into a coherent response. But what is their connection to Transformers? This video unpacks how Transformers evolved from a simple machine translation tool into the universal backbone of modern AI, powering everything from autoregressive models like GPT to diffusion-based models like LLaDA. Topics covered include how the Transformer architecture works (encoder, decoder, attention), why attention replaced recurrence in natural language processing, how GPT training differs from diffusion-based text generation, how BERT's masked language modeling inspired diffusion LLMs, and a concrete walkthrough of LLaDA's masked diffusion process.
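
The masked diffusion process behind LLaDA can be sketched as BERT-style masking with a random masking ratio: sample a noise level t in [0, 1], mask each token independently with probability t, and train the model to recover only the masked tokens, weighting the loss by 1/t. This is a simplified sketch: the names `MASK`, `forward_mask`, and `masked_nll` are hypothetical, and the sequence-length normalization used in practice is omitted.

```python
import random
from typing import List, Tuple

MASK = -1  # hypothetical id for the [MASK] token

def forward_mask(x0: List[int], t: float, rng: random.Random) -> Tuple[List[int], List[bool]]:
    """Forward process: mask each token independently with probability t."""
    masked = [rng.random() < t for _ in x0]
    xt = [MASK if m else tok for tok, m in zip(x0, masked)]
    return xt, masked

def masked_nll(target_log_probs: List[float], masked: List[bool], t: float) -> float:
    """Negative log-likelihood of the original tokens at masked positions, scaled by 1/t."""
    return -sum(lp for lp, m in zip(target_log_probs, masked) if m) / t

# Usage: t is drawn uniformly per training example; the model (not shown)
# would predict the original token at every masked position of xt.
rng = random.Random(0)
t = rng.random()
xt, masked = forward_mask([5, 8, 13, 21], t, rng)
```

Unlike BERT, which masks a fixed fraction (about 15%) of tokens, the ratio here varies from 0 to 1, so the same model learns to denoise anything from a nearly clean sequence to a fully masked one, which is what generation starts from.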

Text diffusion: A new paradigm for LLMs

Overview of diffusion-based LLMs as an alternative to autoregressive models, covering D3PM and LLaDA

Text diffusion is a new paradigm for LLMs. As opposed to mainstream autoregressive models like GPT, Claude, or Gemini (which predict one token at a time), diffusion-based LLMs draft an entire response and refine it progressively, which can make inference up to 10x faster. Models like Gemini Diffusion, Mercury Coder from Inception Labs, and Seed Diffusion from ByteDance are already competitive on coding benchmarks. Inspired by physical diffusion, these models use Markov chains to model data generation as a particle hopping through discrete states. We'll walk through the D3PM and LLaDA papers as case studies.
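
The "particle hopping through discrete states" picture can be made concrete with a per-step transition matrix Q_t, where row i holds the probabilities of moving from token i to every token. A minimal sketch of two noise schedules discussed in D3PM follows: uniform (resample any token) and absorbing (jump to a [MASK] state and stay there, the process masked models like LLaDA build on). The vocabulary size `K`, noise level `beta`, and helper names are illustrative assumptions.

```python
import random
from typing import List

Matrix = List[List[float]]

def uniform_transition(K: int, beta: float) -> Matrix:
    # Q_t = (1 - beta) I + (beta / K) 1 1^T: keep the token, or resample uniformly.
    return [[(1 - beta) * (i == j) + beta / K for j in range(K)] for i in range(K)]

def absorbing_transition(K: int, beta: float, mask: int) -> Matrix:
    # Q_t = (1 - beta) I + beta 1 e_mask^T: keep the token, or jump to the mask state.
    return [[(1 - beta) * (i == j) + beta * (j == mask) for j in range(K)] for i in range(K)]

def step(state: int, Q: Matrix, rng: random.Random) -> int:
    # One hop of the Markov chain: sample x_t from row x_{t-1} of Q_t.
    return rng.choices(range(len(Q)), weights=Q[state], k=1)[0]

# Usage: run the absorbing chain; once state 3 ([MASK]) is reached it never leaves.
rng = random.Random(0)
Q = absorbing_transition(4, 0.3, mask=3)
state = 0
for _ in range(10):
    state = step(state, Q, rng)
```

Each row sums to 1, so every row is a valid categorical distribution; a generative model is then trained to run this chain in reverse.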

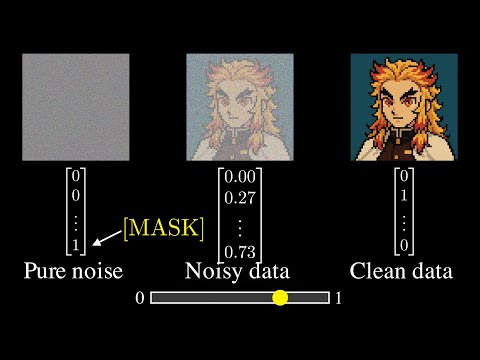

But How Do Diffusion Language Models Actually Work?

Jia-Bin Huang explores several ideas for applying diffusion models to language modeling

Most Large Language Models (LLMs) today are based on autoregressive models (i.e., they predict text in left-to-right order). But diffusion models offer iterative refinement, flexible control, and faster sampling. In this video, we explore several ideas for applying diffusion models to language modeling.

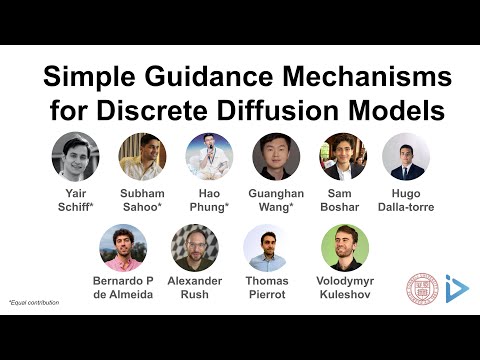

Simple Guidance Mechanisms for Discrete Diffusion Models

Simple Guidance Mechanisms for Discrete Diffusion Models (ICLR 2025 video)

Diffusion models for continuous data gained widespread adoption owing to their high-quality generation and control mechanisms. However, controllable diffusion on discrete data faces challenges given that continuous guidance methods do not directly apply to discrete diffusion. Here, we provide a straightforward derivation of classifier-free and classifier-based guidance for discrete diffusion, as well as a new class of diffusion models that leverage uniform noise and that are more guidable because they can continuously edit their outputs. We improve the quality of these models with a novel continuous-time variational lower bound that yields state-of-the-art performance, especially in settings involving guidance or fast generation. Empirically, we demonstrate that our guidance mechanisms combined with uniform noise diffusion improve controllable generation relative to autoregressive and diffusion baselines on several discrete data domains, including genomic sequences, small molecule design, and discretized image generation.
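
Classifier-free guidance in a discrete setting can be illustrated per token position: combine the conditional and unconditional predicted distributions as p_cond^γ · p_uncond^(1-γ) and renormalize over the vocabulary. This tempered product is the standard classifier-free guidance form and is assumed here for illustration; it is not presented as the paper's exact implementation, and `cfg_distribution` is a hypothetical name.

```python
import math
from typing import List

def log_softmax(logits: List[float]) -> List[float]:
    # Numerically stable log-softmax over one position's vocabulary.
    m = max(logits)
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - lse for z in logits]

def cfg_distribution(cond_logits: List[float], uncond_logits: List[float], gamma: float) -> List[float]:
    """Guided distribution proportional to p_cond^gamma * p_uncond^(1 - gamma)."""
    lc = log_softmax(cond_logits)
    lu = log_softmax(uncond_logits)
    mixed = [gamma * a + (1 - gamma) * b for a, b in zip(lc, lu)]
    # Renormalize so the guided probabilities sum to 1.
    return [math.exp(v) for v in log_softmax(mixed)]

# Usage: gamma = 1 recovers the conditional model, gamma = 0 the unconditional one,
# and gamma > 1 pushes probability further toward tokens favored by the condition.
guided = cfg_distribution([2.0, 0.0], [0.0, 0.0], gamma=2.0)
```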

Simple Diffusion Language Models

Quick introduction to Masked Diffusion Language Models (MDLM) by Alexander Rush
